Experiencing a New Approach to Reliability for Classroom Assessment

Session Type: Non-Webinar

Length: 4 hours

Abstract

Although the concepts and techniques for determining reliability and validity are fundamental to scientific measurement, they have been difficult to bring into the realm of classroom assessment. This training session will be a hands-on introduction to a new approach for enhancing the reliability of assessments developed by classroom teachers. The technique can also be used productively by educational researchers, evaluation specialists, test constructors, trainers, and social scientists. The session will begin with participants experiencing a classroom assessment from the point of view of a student. They will then receive access and orientation to an online assessment information system that will be used to analyze and interpret assessment results. They will learn how the design of the assessment and the interpretation of results exemplify a new approach to educational information based on practical learning goals and practical learning outcomes. Working at this level of information solves several longstanding problems associated with the determination and interpretation of the reliability of classroom assessments. Participants will be given access to the information system and its reliability feature to use in their own settings for one year after the session. Participants must bring computers so that they can access the online assessment information system.

Summary

The difficulties in finding an approach to reliability that is practical and useful for teachers were highlighted in a special issue of Educational Measurement: Issues and Practice (Brookhart, 2003; Smith, 2003). A recent conceptual breakthrough suggests that the difficulties are associated with two features of conventional testing (Zachos & Doane, 2017). The first is reliance on test scores that are composites derived from diverse learning outcomes. Such composite scores don’t provide the information teachers need to improve instruction. The second is that teachers often find the analytic technologies for determining reliability impractical and their results difficult to interpret (Parkes, 2013). This session demonstrates a way of working with discrete (unaggregated) learning outcomes that solves these problems.

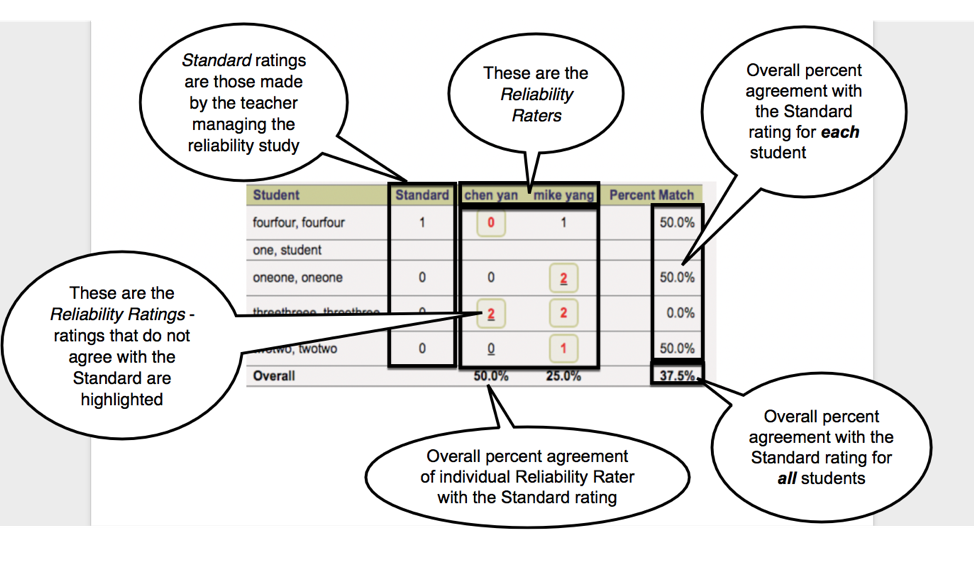

The workshop prepares participants to apply simple indicators of inter-rater agreement (Shepard, 2006) to produce information that can enhance curriculum and instruction as well as assessment. Figure 1 displays such information.

Figure 1. Inter-rater Agreement for a Discrete Learning Outcome
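
As a rough illustration of what a simple indicator of inter-rater agreement for one discrete learning outcome might look like, the sketch below computes the proportion of student responses on which two raters assigned the same level. Percent agreement is assumed here only because it is among the simplest such indicators; the session materials do not state which indicator the information system reports. The rating levels ("attained", "not yet"), the sample ratings, and the function names are all hypothetical.

```python
from collections import Counter


def percent_agreement(ratings_a, ratings_b):
    """Share of student responses on which two raters assigned the same level."""
    if len(ratings_a) != len(ratings_b):
        raise ValueError("Both raters must rate the same set of responses")
    if not ratings_a:
        raise ValueError("At least one rated response is required")
    matches = sum(a == b for a, b in zip(ratings_a, ratings_b))
    return matches / len(ratings_a)


def disagreements(ratings_a, ratings_b):
    """Count each (rater A level, rater B level) pair where the raters differed."""
    return Counter((a, b) for a, b in zip(ratings_a, ratings_b) if a != b)


# Hypothetical ratings of eight student responses on ONE discrete learning
# outcome (not a composite score). The level labels are illustrative only.
rater_a = ["attained", "attained", "not yet", "attained",
           "not yet", "attained", "not yet", "attained"]
rater_b = ["attained", "not yet", "not yet", "attained",
           "not yet", "attained", "attained", "attained"]

agreement = percent_agreement(rater_a, rater_b)
print(f"Agreement: {agreement:.0%}")
print(disagreements(rater_a, rater_b))
```

Because the ratings concern a single outcome rather than an aggregate score, each disagreement points to a specific response that the raters can revisit together, for example to sharpen the wording of the outcome or the rating criteria.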

Twelve learning objectives, with operationally defined components, form the structural backbone of the training session. They are designed to move participants’ perspective from a focus on aggregate performance scores to engagement with discrete learning outcomes at a level of granularity appropriate to classroom teaching.

Participants are not expected to master these objectives in one training session. Rather, the session will lay the groundwork for grasping a practical approach to reliability that is appropriate for classroom assessment. Participants will rate the clarity and importance of the objectives at the beginning and end of the training session. These ratings will be used to reiterate the new approach to reliability, to evaluate the success of the session, and to stimulate a critical capstone conversation.

Lead presenter Paul Zachos worked for 35 years in primary and secondary schools and universities, and for twelve years as a researcher and planner for the NYS Education Department. He provides workshops and assessment and evaluation services to schools, industry, and federal agencies. He holds a Ph.D. in Educational Psychology and Statistics from the University at Albany.

Co-presenter Monica De Tuya taught science and special education at the elementary, middle, and high school levels. Currently, she serves as Director of Programs and Operations at ACASE and consults on training program development and evaluation in industry. She holds an M.S. in Secondary Education and is a doctoral candidate in Information Science at the University at Albany.

Co-presenter Panpan Yang, a high school teacher of psychology in Linfen, China, is presently a Ph.D. candidate in Educational Psychology and Methodology at the University at Albany.

Co-presenter Jason Brechko is a National Board-certified science teacher and a New York State Master Teacher. He teaches middle-school biology and physical science and has taught Earth Science, Astronomy, and Environmental Science at the high school level. Jason conducts workshops at regional and state-level conferences and has provided assessment and evaluation services to NASA-funded programs for teachers.

Schedule of proposed activities and topics to be covered during the proposed session timeline

- As a pre-assessment, the participants will rate the clarity and importance of the 12 Training Session Learning Objectives (see below).

- The Cubes & Liquids (C&L) assessment activity will be administered to participants.

- C&L will be deconstructed, via facilitated discussion, to illustrate a new approach to educational information based on intended learning outcomes specified at a critical level of granularity.

- Participants will be given access to and trained to use several features of ACASE’s online assessment information system.

- The information system’s reliability feature will be demonstrated.

- Session leaders will display sample results from their ratings of participant performance on C&L.

- Session leaders will demonstrate the reliability feature based on their ratings.

- Participants will conduct their own ratings of responses to C&L.

- Participants and session leaders will examine reports on the reliability of their ratings and learn how to apply the results to improve curriculum, assessment, instruction, and professional development.

- As a post-assessment, the participants will rate the clarity and importance of the 12 Training Session Learning Objectives (see below).

- The reliability feature of the assessment information system will be employed to review pre- and post-assessment results.

- Collaborative evaluation of the training session will be conducted.

12 Training Session Learning Objectives

- Uses the concept of the intended learning outcome to identify the end towards which all educational processes/activities are directed

- Uses the concept of the intended learning outcome to distinguish assessment from the other essential features of educational processes/activities

- Uses the concept of the intended learning outcome to distinguish instruction from the other essential features of educational processes/activities

- Applies the concept of the critical level of specificity to educational planning and decision-making, i.e., distinguishes broad learning goals from those specified at a level appropriate for lessons and units of instruction

- Applies the concept of core capabilities to educational planning and decision-making

- Applies the distinction between domains of learning to educational planning and decision-making

- Distinguishes educational assessment from testing and grading

- Distinguishes educational assessment from educational evaluation

- Brings concern for the notion of validity to challenges and problems in assessment and evaluation

- Brings concern for the notion of reliability to challenges and problems in assessment and evaluation

- Can apply assessment information to evaluate educational activities

- Can apply assessment information to build community

Works Cited

Brookhart, S. M. (2003). Developing measurement theory for classroom assessment purposes and uses. Educational Measurement: Issues and Practice, 22(4), 5–12.

Parkes, J. (2013). Reliability in classroom assessment. In J. McMillan (Ed.), SAGE Handbook of Research on Classroom Assessment. Sage.

Shepard, L. A. (2006). Classroom assessment. In R. L. Brennan (Ed.), Educational Measurement (4th ed., pp. 623–646). American Council on Education/Praeger.

Smith, J. K. (2003). Reconsidering reliability in classroom assessment and grading. Educational Measurement: Issues and Practice, 22(4), 26–33.

Zachos, P., & Doane, W. (2017). Knowing the Learner: A New Approach to Educational Information. Shires Press.